Security tools promise safety. They promise control. They promise peace of mind. For non-technical teams, reality looks different. Tools feel complex. Alerts feel endless. Instead of reducing risk, some systems add to it.

The Gap Between Tool Design and Real Users

Engineers build most security tools for other engineers. Non-technical teams use them anyway. HR. Finance. Operations. This creates friction. The tools assume knowledge that users do not have. Mistakes follow. Security becomes fragile, not strong.

Alert Fatigue: When Warnings Stop Working

Alerts are meant to protect. Too many alerts cause problems. When everything feels urgent, nothing does. Teams stop reacting. They skim. They mute notifications. Real threats hide among noise.

Why Alerts Keep Increasing

Vendors add alerts to show value. More alerts look like more protection. Each new feature adds another signal. Rarely is anything removed. This creates overload. Attention breaks before systems do.

How Humans Respond to Constant Alerts

People adapt quickly. They learn to tune out familiar patterns. Repeated warnings lose meaning. Stress increases. Eventually, teams assume alerts are false. That assumption is dangerous.
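
One way to cut the noise is to collapse repeats before anyone sees them. The sketch below is a minimal illustration, not any vendor's feature. The `Alert` fields, the 1 to 5 severity scale, and the `summarize` helper are all assumptions made for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    rule: str      # hypothetical rule name, e.g. "failed-login"
    source: str    # hypothetical device or system that raised it
    severity: int  # assumed scale: 1 (low) to 5 (critical)


def summarize(alerts):
    """Collapse identical alerts into one row each, most severe first."""
    counts = Counter(alerts)  # identical alerts share one key
    ranked = sorted(
        counts.items(),
        key=lambda item: (-item[0].severity, -item[1]),
    )
    return [(a.rule, a.source, a.severity, n) for a, n in ranked]


if __name__ == "__main__":
    noisy = [Alert("failed-login", "hr-laptop", 2)] * 40
    noisy.append(Alert("new-admin-account", "finance-db", 5))
    for row in summarize(noisy):
        print(row)
```

Forty-one alerts become two rows. The single critical one moves to the top instead of hiding under forty repeats.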

Misconfiguration Is the Default State

Security tools require setup. A good setup requires expertise. Non-technical teams often guess. Or follow partial guides. Small choices create big gaps. Permissions are too wide. Rules are too loose. The system looks secure. It is not.
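
A small check can surface permissions that are too wide. The sketch below is a rough illustration under stated assumptions. The grant format, the group names, and the `find_wide_permissions` helper are hypothetical and do not match any specific product's export.

```python
def find_wide_permissions(grants, broad_groups=("everyone", "all-staff")):
    """Return grants that give write or admin access to a broad group."""
    risky_levels = {"write", "admin"}  # assumed access levels
    return [
        g for g in grants
        if g["group"] in broad_groups and g["level"] in risky_levels
    ]


if __name__ == "__main__":
    # Hypothetical grants, as they might appear in an exported report.
    grants = [
        {"resource": "payroll-folder", "group": "everyone", "level": "write"},
        {"resource": "payroll-folder", "group": "finance", "level": "write"},
        {"resource": "handbook", "group": "all-staff", "level": "read"},
    ]
    for g in find_wide_permissions(grants):
        print(f"{g['resource']}: {g['group']} has {g['level']} access")
```

Only the first grant is flagged. Read access for all staff and write access for a small team pass the check.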

False Confidence Is the Most Dangerous Outcome

Installed tools create comfort. Comfort reduces caution. Teams believe they are protected. They stop asking questions. Security becomes a checkbox. Not a process. This confidence is misplaced, and it is hard to challenge.

The same psychological pattern appears in other digital environments, including platforms where users bet online. When systems look secure and automated, people assume risks are already managed for them.

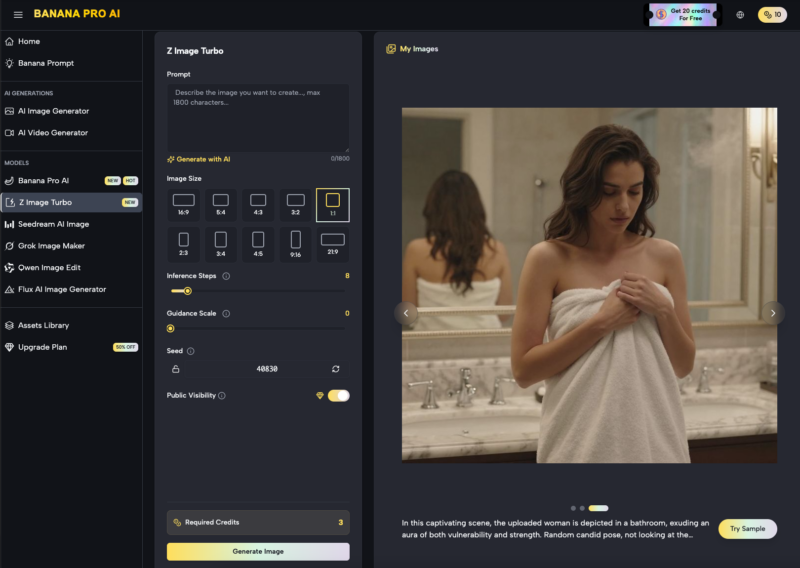

When Security Tools Hide Complexity

Good interfaces hide complexity. Security tools often hide too much. Users cannot see what matters. They see green checkmarks. Green does not mean safe. It means configured. Without understanding, teams trust the system blindly.

Enterprise Software and the Illusion of Safety

Big tools feel reliable. Big brands feel trustworthy. This creates an illusion. Size replaces scrutiny. Non-technical teams assume defaults are safe. They rarely are. Security depends on context. Defaults ignore context.

The Human Factor Is Not a Weakness

Humans are blamed for breaches. That misses the point. Systems should support users, not trick them. When tools fail users, users fail systems. This is a design failure. Not a human failure.

Why Complexity Grows Over Time

Security tools evolve. Features accumulate. Old rules remain. New ones stack on top. No one cleans up. No one audits regularly. Complexity increases quietly. Risk grows silently.

Automation Without Understanding

Automation sounds safe. It feels modern. But automation removes visibility. Users stop watching. When automation breaks, no one knows why.

Automated Rules That No One Remembers

Rules are created during emergencies. They are never revisited. Over time, they conflict. No one understands the full system anymore.
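
A regular review can start with a simple list of rules nobody has looked at in a while. The sketch below assumes each rule has a recorded last-review date. The rule names, the 180-day threshold, and the `stale_rules` helper are illustrative assumptions, not a standard.

```python
from datetime import date, timedelta


def stale_rules(rules, today, max_age_days=180):
    """Return names of rules last reviewed more than max_age_days ago."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        name for name, last_reviewed in rules.items()
        if last_reviewed < cutoff
    )


if __name__ == "__main__":
    # Hypothetical rules mapped to the date someone last reviewed them.
    rules = {
        "block-vendor-ip": date(2022, 3, 1),
        "quarantine-macros": date(2024, 5, 20),
    }
    print(stale_rules(rules, today=date(2024, 6, 1)))
```

With these sample dates, only `block-vendor-ip` is listed. The point is the habit, not the threshold.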

Blind Trust in Automated Decisions

Automated actions feel objective. They are not. They reflect old assumptions. And outdated threats. Trust without review creates exposure.

When Automation Replaces Judgment

Judgment adapts. Automation does not. Security needs both. Too much automation removes thinking.

The Role of Leadership in Security Failure

Leaders approve tools. They do not use them. Decisions are based on reports, not experience. This disconnect matters. Problems go unseen. Security becomes performative. Not practical.

Better Security Starts With Less

Fewer tools help more. So do fewer alerts. Clear systems beat complex ones. Understanding beats coverage. Security improves when teams know what tools actually do.

Designing Security for Non-Technical Teams

Good security respects limits. It reduces decisions. Clear language matters. So does visibility. Tools should explain risk. Not just display status. Security works best when users feel confident and informed.

Rethinking What “Secure” Really Means

Secure does not mean tool-heavy. It means resilient. It means systems people understand. And can maintain. Non-technical teams are not the problem. The tools often are.