There’s something almost invisible about real-time technology when it works well. A message appears instantly, a live score updates without delay, and a transaction completes before you even think about it. No drama, no waiting, just quiet efficiency. But beneath that seamless surface sits a surprisingly complex ecosystem, designed to withstand enormous pressure. High-load digital platforms, think global streaming services, multiplayer games, or financial exchanges, don’t just process data. They choreograph millions of interactions simultaneously. And here’s the twist: users don’t care how hard it is. They expect it to just work. So what actually makes that possible?

The myth of “instant”

“Real-time” isn’t actually real time. Not quite. What users perceive as instant is typically anything under about 100 milliseconds, a threshold that comes from human-computer interaction research on response times. Once delays creep past roughly 250 milliseconds, people start noticing. At around one second, frustration kicks in. Speed is also often misunderstood. It’s not about how much data a system processes overall (throughput), but how quickly it responds to each interaction (latency). This is where latency dominates. Consider this:

- A delay of 100 ms can reduce user engagement measurably

- Google once reported that an added 500 ms of delay cut traffic by about 20 percent

- In financial trading, even microseconds can translate into millions

The system doesn’t need to be perfect; it needs to feel immediate.
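
To make the difference between overall speed and per-interaction latency concrete, here is a minimal sketch in Python. The latency samples are made-up numbers, and `percentile` is a hypothetical helper using the nearest-rank method, not a function from any particular monitoring tool. It shows why teams tend to look at percentiles rather than a single average.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile of a list of latency samples (in ms)."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]

# Hypothetical per-request latencies in milliseconds (illustrative only).
latencies_ms = [40, 42, 45, 47, 50, 52, 55, 60, 180, 900]

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50 = percentile(latencies_ms, 50)   # the typical request
p90 = percentile(latencies_ms, 90)   # 1 in 10 requests is at least this slow
p99 = percentile(latencies_ms, 99)   # the worst tail
within_budget = sum(1 for ms in latencies_ms if ms <= 100)

print(f"mean={mean_ms:.1f} ms  p50={p50} ms  p90={p90} ms  p99={p99} ms")
print(f"{within_budget}/{len(latencies_ms)} requests felt instant (<= 100 ms)")
```

With these sample numbers the typical request takes 50 ms, yet one request in ten takes 180 ms or more. A single average describes neither group well, which is why the slow tail usually gets its own number.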

Distributed systems

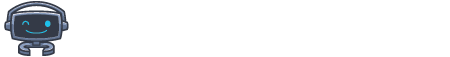

At the heart of every high-load platform sits a distributed system. Work is spread across many machines instead of sitting on a single box. There are two ways to grow: adding more power to one machine is vertical scaling, whereas adding more machines to share the work is horizontal scaling. Most modern systems lean heavily on horizontal scaling, because the failure of one node does not have to drag everything else down. Betting platforms like 1xbet rely heavily on distributed infrastructure to handle spikes in user activity, especially during major live events. Distribution also brings a well-known constraint, the CAP theorem: when a network partition cuts nodes off from each other, a system cannot guarantee both consistency and availability, so engineers have to choose which one to favor. Should a system always respond, even if the data is momentarily inconsistent? Or should it wait for perfect accuracy and risk delays? In practice, many systems make this trade-off differently for different operations.
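
The consistency-versus-availability question can be illustrated with a toy model. The sketch below is a deliberate simplification, not how any real database implements replication: `Replica`, its `mode` flag, and the `Unavailable` exception are all hypothetical names invented for this example. A replica that has lost contact with the primary either answers with what it has (favoring availability) or refuses to answer (favoring consistency).

```python
class Unavailable(Exception):
    """Raised when a replica refuses to answer rather than risk stale data."""

class Replica:
    def __init__(self, mode):
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.data = {}
        self.partitioned = False  # True when cut off from the primary

    def apply_update(self, key, value):
        # Updates from the primary only arrive while the link is healthy.
        if not self.partitioned:
            self.data[key] = value

    def read(self, key):
        if self.partitioned and self.mode == "CP":
            raise Unavailable("cannot confirm the latest value during a partition")
        return self.data.get(key)  # in AP mode this may be stale

ap, cp = Replica("AP"), Replica("CP")
for replica in (ap, cp):
    replica.apply_update("score", "1-0")
    replica.partitioned = True               # the network splits
    replica.apply_update("score", "2-0")     # this update never arrives

print(ap.read("score"))   # answers, but with the older value
try:
    cp.read("score")
except Unavailable as exc:
    print("CP replica refused:", exc)
```

Neither behavior is wrong. A live scoreboard may prefer the slightly stale answer, while an account balance may prefer the refusal.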

Event-driven architecture

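Tightly coupled systems, where each service calls the next and waits for an answer, are too slow and too brittle for modern demands. Instead, many platforms now use event-driven architecture, where an action is published as an event and every interested service reacts to it independently.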

Think of it like a domino effect, except the dominoes are services, queues, and processors reacting in parallel. A user action generates an event, which is then placed into a queue. From there, multiple independent services consume and process that event simultaneously. This separation allows systems to remain flexible and resilient. Message queues such as Kafka or RabbitMQ act as shock absorbers. When traffic spikes, they hold and distribute the load rather than letting the system break. Without this layer, even well-designed platforms would struggle to survive peak demand.
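
The flow described above (an action becomes an event, the event is queued, independent services consume it) can be sketched in a few lines. This is an in-process stand-in written for illustration only. The `EventBus` class and the topic and consumer names are assumptions, and a real broker such as Kafka or RabbitMQ adds persistence, networking, and delivery guarantees that this toy ignores.

```python
from collections import defaultdict, deque

class EventBus:
    """Toy fan-out broker: each subscriber gets its own queue per topic."""

    def __init__(self):
        self._queues = defaultdict(dict)  # topic -> {consumer name: deque}

    def subscribe(self, topic, consumer):
        self._queues[topic][consumer] = deque()

    def publish(self, topic, event):
        # Every subscriber receives the event in its own queue.
        for queue in self._queues[topic].values():
            queue.append(event)

    def poll(self, topic, consumer, max_events=1):
        # A consumer pulls at its own pace; unread events stay queued.
        queue = self._queues[topic][consumer]
        batch = []
        while queue and len(batch) < max_events:
            batch.append(queue.popleft())
        return batch

    def backlog(self, topic, consumer):
        return len(self._queues[topic][consumer])

bus = EventBus()
bus.subscribe("order_placed", "billing")
bus.subscribe("order_placed", "analytics")

for i in range(5):                      # a small traffic spike
    bus.publish("order_placed", {"id": i})

fast = bus.poll("order_placed", "analytics", max_events=5)  # keeps up
slow = bus.poll("order_placed", "billing", max_events=2)    # falls behind

print(len(fast), len(slow), bus.backlog("order_placed", "billing"))
```

The slower consumer does not block the faster one, and nothing is dropped: the backlog simply waits in the queue. That is the shock-absorber behavior in miniature.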

Real-time data processing

Old-school systems processed data in batches. That delay is no longer acceptable in environments where milliseconds matter. Modern platforms treat data as a continuous stream. The difference can be summarized quite simply:

- Batch processing delivers delayed, periodic updates

- Streaming delivers continuous, real-time updates

Streaming technologies such as Apache Kafka or Apache Flink allow systems to react to each event within moments of its arrival. Here’s the less glamorous side: real-time systems demand constant computing power and careful monitoring. The responsiveness is impressive, but the operational complexity behind it is easy to underestimate.
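
The contrast between batch and streaming can be shown with something as small as an average. In the sketch below, `batch_average` and `streaming_average` are hypothetical helper names and the readings are made-up values. The batch version needs the whole dataset before it can answer, while the streaming version produces an up-to-date answer after every new value.

```python
def batch_average(values):
    """Batch style: wait for all the data, then compute once."""
    return sum(values) / len(values)

def streaming_average(values):
    """Streaming style: update a running mean as each value arrives."""
    count, mean = 0, 0.0
    for value in values:
        count += 1
        mean += (value - mean) / count  # incremental mean update
        yield mean

readings = [10, 20, 30, 40]  # illustrative values only

print(batch_average(readings))            # one answer, at the end
print(list(streaming_average(readings)))  # an answer after every reading
```

Both approaches agree on the final figure. The streaming version simply never makes anyone wait for it, at the cost of doing work on every single event.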

Load balancing

Load balancers are easy to overlook, yet they often decide whether a system holds up or fails under stress. They spread incoming traffic across servers so that no single machine gets swamped. Smarter ones take into account how busy each server is, where it is located relative to the user, and whether it is passing health checks, then route each request accordingly.
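
A rough sketch of that decision logic follows. The `Server` fields, the region labels, and the `pick_server` function are assumptions made for illustration, and the rule used here (skip unhealthy servers, prefer the client's region, then pick the least busy) is just one plausible policy among many.

```python
from dataclasses import dataclass

@dataclass
class Server:
    name: str
    region: str
    healthy: bool = True
    active: int = 0  # requests currently in flight

def pick_server(servers, client_region):
    """Choose a healthy server, preferring the client's region, then low load."""
    candidates = [s for s in servers if s.healthy]
    if not candidates:
        raise RuntimeError("no healthy servers available")
    # False sorts before True, so same-region servers win; ties go to least busy.
    return min(candidates, key=lambda s: (s.region != client_region, s.active))

servers = [
    Server("eu-1", "eu", active=8),
    Server("eu-2", "eu", healthy=False),  # failed its health check
    Server("us-1", "us", active=2),
    Server("eu-3", "eu", active=3),
]

print(pick_server(servers, "eu").name)    # nearest healthy server with least load
print(pick_server(servers, "asia").name)  # no local server: least busy overall
```

A production balancer would usually weigh distance against load rather than ranking one strictly above the other, so that a nearby but overloaded server does not keep receiving traffic.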

Caching: Speed through memory

If latency is the enemy, caching is the shortcut that keeps everything moving smoothly. Instead of repeatedly querying the main database, systems store frequently accessed data in fast memory layers. This reduces both response times and server load. Caching exists at multiple levels. Browsers can store data locally, CDNs place data closer to users, and in-memory stores like Redis keep frequently used information close at hand. However, keeping cached data accurate is hard: when the underlying data changes, every cached copy has to be invalidated or allowed to expire, or users will see stale values.
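
One common way to balance speed against freshness is to give each cached entry a time to live. The sketch below is a minimal in-memory version, assuming a hypothetical `TTLCache` class and a made-up `slow_lookup` function standing in for the main database. It is not the Redis API. A fake clock is injected so that expiry can be shown without actually waiting.

```python
import time

class TTLCache:
    """Cache-aside helper: serve from memory, reload from the source on expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (value, expires_at)

    def get_or_load(self, key, loader):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]              # cache hit: no database call
        value = loader(key)              # miss or expired: ask the source
        self._store[key] = (value, now + self.ttl)
        return value

    def invalidate(self, key):
        # Call this when the underlying data is known to have changed.
        self._store.pop(key, None)

fake_now = [0.0]   # controllable clock for the demonstration
db_calls = []      # records every trip to the "database"

def slow_lookup(key):
    db_calls.append(key)
    return f"profile:{key}"

cache = TTLCache(ttl_seconds=30, clock=lambda: fake_now[0])
cache.get_or_load("alice", slow_lookup)  # miss: hits the database
cache.get_or_load("alice", slow_lookup)  # hit: served from memory
fake_now[0] = 31.0                       # 31 seconds later
cache.get_or_load("alice", slow_lookup)  # expired: hits the database again

print(len(db_calls))  # three reads, two database calls
```

The time to live is the knob: a longer value means fewer database calls but a longer window in which users may see outdated data.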

Observability: Watching systems in motion

High-load systems misbehave without warning. Sudden surges, outages, and odd slowdowns rarely announce themselves. This is exactly when visibility into the system matters most. Engineers rely on live telemetry rather than guesswork: dashboards showing response times, error rates, throughput, and resource usage. Patterns emerge only if someone, or something, keeps watching, which is why alerts are configured to fire when a metric crosses a threshold, so that problems don’t hide in plain sight.
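
As a small illustration of what "keeping watch" can mean in code, the sketch below tracks the error rate over a sliding window of recent requests and flags when it crosses a threshold. `ErrorRateMonitor`, the window size, and the threshold are all assumptions chosen for the example. Real monitoring stacks collect many more signals and route alerts to people.

```python
from collections import deque

class ErrorRateMonitor:
    """Tracks failures over the most recent requests and flags spikes."""

    def __init__(self, window=100, threshold=0.05):
        self.outcomes = deque(maxlen=window)  # True means the request failed
        self.threshold = threshold

    def record(self, failed):
        self.outcomes.append(bool(failed))    # oldest entry drops off when full

    def error_rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        return self.error_rate() > self.threshold

monitor = ErrorRateMonitor(window=10, threshold=0.2)

for _ in range(10):
    monitor.record(failed=False)       # a quiet period
quiet = monitor.should_alert()

for _ in range(3):
    monitor.record(failed=True)        # a sudden burst of failures
noisy = monitor.should_alert()

print(quiet, noisy, monitor.error_rate())
```

Using a window rather than an all-time total matters here: three failures among thousands of historical successes would barely register, while three failures among the last ten requests clearly do.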

Conclusion

Instant responses don’t happen by accident. Many moving parts have to line up: infrastructure distributed across regions, live data streams, monitoring and alerting working together, and request paths designed to cut waiting time. The irony is that the better everything works, the less anyone notices. People don’t applaud speed. They just assume things should be fast. Behind every instant interaction sits a structure built to handle chaos, absorb pressure, and respond without hesitation. Not flawless, but effective.